Michal Tadeáš is the Director of Growth at Mojo, a focus and productivity app that's grown to millions of users. Over the past two years, Mojo has increased ARPU by 60% year-over-year, primarily through pricing experiments. Here, he shares the pricing strategies and experiments that made the biggest impact.

Why Pricing Experiments Work So Well

At Mojo, we've run approximately 40 pricing experiments over the past two years. Our success rate? 56%. That compares with only 14% for design, copy, and layout tests.

Why are pricing experiments so much more effective? Because pricing is a system, not a single variable. It encompasses:

- Your subscription plan portfolio (what tiers you offer)

- Introductory offers and trial structures

- Psychological pricing elements (anchoring, framing, social proof)

- Local currencies and pricing adjustments

- Promotional strategies

When you optimize this system, the impact on revenue is substantial. A 56% success rate means more than half of your pricing tests produce a winner.

Subscription Duration: Finding the Right Mix

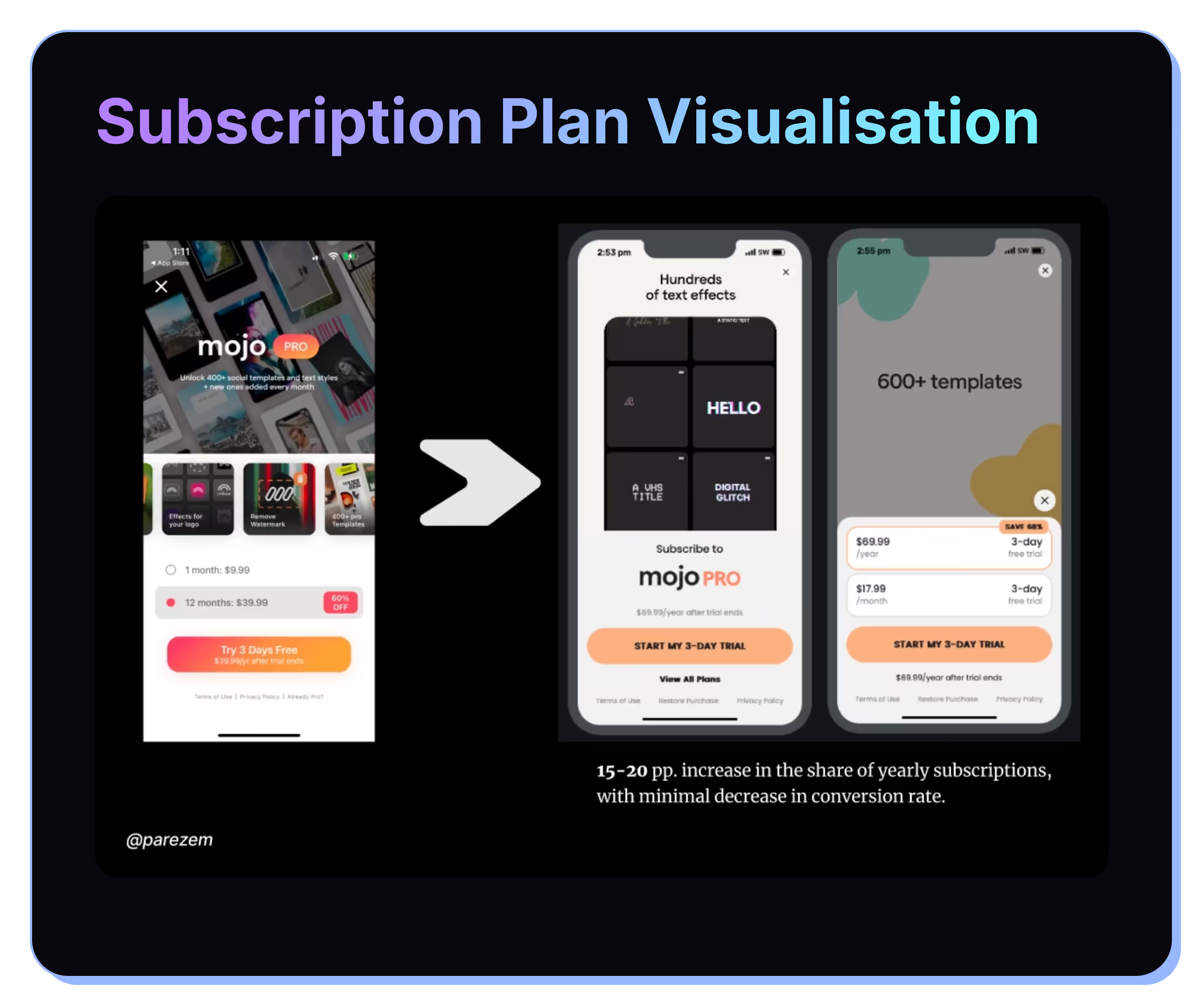

One of the first pricing experiments we ran was on subscription duration. Should users choose weekly, monthly, or yearly plans? What order should they appear in?

We tested various combinations and found that yearly subscriptions consistently drove the highest lifetime value and lowest churn. This makes sense: users who commit to annual plans are more invested in your product.

But here's what surprised us: simply changing how the plans were arranged made a difference. When we moved the monthly option behind a "View all plans" button while keeping the yearly and weekly plans visible, conversion to annual plans increased.

The implication? Visual prominence and friction matter. If your most profitable plan is front and center, users are more likely to choose it.

Price Point Testing with Competitor and User Research

Selecting the right price point is both art and science. We use multiple data sources: competitor pricing research, VPN tools to see pricing in different markets, and user reviews to understand price sensitivity.

User reviews are particularly valuable. If users on the App Store are complaining "Great app but too expensive," you have a pricing opportunity. Conversely, if no one mentions price, you might have room to increase.
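Scanning reviews for price signals can be partly automated. The sketch below is a minimal illustration, not Mojo's tooling: it assumes you have already exported review texts as strings, and the keyword patterns `MENTION` and `COMPLAINT` and the helper `price_signal` are hypothetical, English-only, and would need tuning per market. It reports both how often price comes up at all and how often it comes up as a complaint.

```python
import re

# Hypothetical, English-only patterns; extend them for each market you sell in.
MENTION = re.compile(
    r"\b(pric(?:e|es|ed|ing|ey)|cost(?:s|ly)?|expensive|overpriced)\b", re.IGNORECASE
)
COMPLAINT = re.compile(
    r"\b(too expensive|overpriced|too pricey|costs too much)\b", re.IGNORECASE
)


def price_signal(reviews: list[str]) -> tuple[float, float]:
    """Return (share of reviews mentioning price, share complaining about price)."""
    if not reviews:
        return 0.0, 0.0
    n = len(reviews)
    mentions = sum(1 for text in reviews if MENTION.search(text))
    complaints = sum(1 for text in reviews if COMPLAINT.search(text))
    return mentions / n, complaints / n


reviews = [
    "Great app but too expensive",
    "Helps me focus every day",
    "Love it, worth the price",
    "Crashes on launch",
]
print(price_signal(reviews))
```

A high complaint share points to a possible price cut or cheaper tier; a near-zero mention share suggests room to test higher prices. Keyword matching is crude, so it is worth reading a sample of the matched reviews by hand.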

Don't Be Afraid to Test Lower Prices

Conventional wisdom says you should test higher prices first. But we found that testing lower prices can be just as profitable—sometimes more so.

For example, in Mexico, we tested a 25% price reduction. The result: a 25% lift in new revenue, meaning the volume gain more than offset the lower price per sale. Lower prices can expand your addressable market and increase total revenue.
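To sanity-check a result like this, you can back out the change in purchase volume it implies. This is a minimal sketch assuming new revenue is simply price times number of purchases (it ignores renewals, refunds, and plan mix); `implied_volume_change` is a hypothetical helper name.

```python
def implied_volume_change(price_change: float, revenue_change: float) -> float:
    """Relative change in purchase volume implied by a price change and the
    observed revenue change, assuming revenue = price * volume."""
    return (1 + revenue_change) / (1 + price_change) - 1


# Volume increase needed just to break even after a 25% price cut.
print(f"break-even: {implied_volume_change(-0.25, 0.0):.1%}")
# Volume increase implied by a 25% price cut that lifted revenue by 25%.
print(f"observed:   {implied_volume_change(-0.25, 0.25):.1%}")
```

Under this simple model, a 25% price cut needs about 33% more purchases just to break even, and a 25% revenue lift implies roughly 67% more purchases.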

The key is having the data to back it up. Don't just lower prices blindly; measure the impact on conversion, churn, and total revenue.

Balancing Revenue and Retention

When testing prices, there's a natural tension: higher prices increase revenue per user but may hurt retention. The question becomes: what's the optimal price that maximizes long-term revenue?

We use 7-day cancellation rate as a proxy for retention risk. If your cancellation rate spikes after a price increase, you know the price is too aggressive. If cancellation stays flat or decreases, you've likely found a good price point.
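Computing the metric per test arm is straightforward, with one subtlety: only count purchases whose full 7-day window has already passed, otherwise recent buyers drag the rate down. The sketch below assumes a simple record per purchase; `Subscription`, its fields, and `seven_day_cancellation_rate` are hypothetical names, and "cancellation" is assumed to mean the moment auto-renew was switched off.

```python
import math
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

WINDOW = timedelta(days=7)


@dataclass
class Subscription:
    arm: str                                  # experiment arm, e.g. "$69.99"
    purchased_at: datetime
    cancelled_at: Optional[datetime] = None   # assumed: when auto-renew was turned off


def seven_day_cancellation_rate(subs: list[Subscription], arm: str, as_of: datetime) -> float:
    # Keep only purchases whose 7-day window has fully elapsed by `as_of`.
    cohort = [s for s in subs if s.arm == arm and s.purchased_at + WINDOW <= as_of]
    if not cohort:
        return math.nan  # no mature purchases yet, so the rate is undefined
    cancelled = sum(
        1
        for s in cohort
        if s.cancelled_at is not None and s.cancelled_at - s.purchased_at <= WINDOW
    )
    return cancelled / len(cohort)


subs = [
    Subscription("$69.99", datetime(2024, 1, 1), datetime(2024, 1, 3)),   # cancelled on day 2
    Subscription("$69.99", datetime(2024, 1, 1)),                         # still active
    Subscription("$69.99", datetime(2024, 1, 1), datetime(2024, 1, 20)),  # cancelled after the window
    Subscription("$69.99", datetime(2024, 1, 30)),                        # too recent to judge
]
print(seven_day_cancellation_rate(subs, "$69.99", as_of=datetime(2024, 2, 1)))
```

Comparing this number across arms, rather than in isolation, is what tells you whether a price increase is triggering extra cancellations.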

Let's say you're testing three price points: $49.99, $69.99, and $89.99. You'll see different conversion rates and different 7-day cancellation rates for each. By projecting revenue over 13 months (accounting for churn), you can see which price point maximizes lifetime revenue.
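Here is one way such a projection could be sketched for an annual plan. A 13-month window is long enough to include the first renewal at month 12, so early cancellations show up as lost renewal revenue. Everything below is an assumption for illustration: the conversion and cancellation rates are made up (they are not Mojo's data), users who cancel within 7 days are assumed never to renew, the remaining buyers are assumed to renew with a hypothetical probability `RENEWAL_IF_NOT_CANCELLED`, and refunds are ignored.

```python
from dataclasses import dataclass

# Hypothetical: chance that a buyer who did NOT cancel in week one renews at month 12.
RENEWAL_IF_NOT_CANCELLED = 0.6


@dataclass
class PriceArm:
    price: float       # annual plan price in USD
    conversion: float  # share of paywall viewers who purchase (made-up inputs)
    cancel_7d: float   # share of buyers who cancel within 7 days (made-up inputs)


def revenue_13m(arm: PriceArm, viewers: int = 1000) -> float:
    """Projected revenue over 13 months per `viewers` paywall views."""
    buyers = viewers * arm.conversion
    first_year = buyers * arm.price
    # Early cancellers are assumed never to renew; others renew with a fixed probability.
    renewers = buyers * (1 - arm.cancel_7d) * RENEWAL_IF_NOT_CANCELLED
    return first_year + renewers * arm.price


arms = [
    PriceArm(49.99, 0.050, 0.20),
    PriceArm(69.99, 0.042, 0.25),
    PriceArm(89.99, 0.030, 0.35),
]
for arm in arms:
    print(f"${arm.price}: ${revenue_13m(arm):,.0f} per 1,000 viewers")
best = max(arms, key=revenue_13m)
print(f"best: ${best.price}")
```

With these invented inputs the middle price wins: the top price converts too few users and loses more renewals to early cancellation. Your own test data, not these numbers, should decide the outcome.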

Often, the highest price point isn't the winner. The sweet spot is usually in the middle—high enough to optimize revenue but not so high that it triggers buyer's remorse and cancellations.

Psychological Pricing: Anchoring and Framing

Psychology plays a huge role in pricing decisions. Two powerful techniques:

Anchoring: Users make decisions relative to a reference point. If you show the yearly price first (e.g., $99.99/year), users perceive monthly ($9.99/month) as cheaper. But if you show monthly first, yearly seems expensive.

Framing: The way you present the price changes perception. "Just $0.27 per day" feels different from "$99.99 per year," even though they're the same price.

A common tactic is to display the yearly plan broken down into monthly costs: "Just $8.33/month when billed annually." This makes the yearly price feel more affordable.
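The per-period figures used in framing are plain division, but they are easy to get wrong in copy. A minimal sketch, where `per_period_prices` is a hypothetical helper that rounds to the nearest cent and uses 52 weeks and 365 days per year:

```python
def per_period_prices(annual_price: float) -> dict[str, float]:
    """Break an annual price into monthly, weekly, and daily equivalents."""
    return {
        "per_month": round(annual_price / 12, 2),
        "per_week": round(annual_price / 52, 2),
        "per_day": round(annual_price / 365, 2),
    }


print(per_period_prices(99.99))
# {'per_month': 8.33, 'per_week': 1.92, 'per_day': 0.27}
```

Generating these strings from the actual price, rather than typing them by hand, keeps the framed figure consistent with what users are billed when prices change or vary by country.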

Another anchor to test is the ratio between the yearly and monthly prices. Should yearly be 10x the monthly price? 7x? 4x? We tested various ratios and found that users respond best when there's a meaningful but not outrageous discount for committing to a year.
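Each ratio corresponds to a specific discount compared with paying monthly for 12 months. For example, the $9.99/month and $99.99/year pair from the anchoring example is roughly a 10x ratio, about 17% cheaper than $119.88 for twelve monthly payments. A minimal sketch (`implied_annual_discount` is a hypothetical helper):

```python
def implied_annual_discount(ratio: float) -> float:
    """Discount of the yearly plan versus 12 monthly payments,
    when yearly price = ratio * monthly price."""
    return 1 - ratio / 12


for ratio in (10, 7, 4):
    print(f"{ratio}x monthly -> {implied_annual_discount(ratio):.0%} off")
```

So the 10x, 7x, and 4x ratios correspond to roughly 17%, 42%, and 67% off, which makes it easier to reason about what counts as "meaningful but not outrageous."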

Free Trial Strategy: Duration and Conversion

Free trials are one of the most debated monetization decisions. Should you offer a trial? What length?

We tested extensively and found that trials are crucial for new users. They reduce purchase friction and give users time to experience your value. For experienced users (e.g., returning after a long absence), trials matter less.

On trial duration, we tested 3-day vs. 7-day trials. The difference? Minimal. Both drove similar conversion rates and LTV. So unless you have strong evidence that a longer trial drives better outcomes, the shorter duration means faster monetization.

Promotional Strategy: Discounts at Key Moments

We've experimented extensively with discounts, particularly around key moments in the user lifecycle.

Black Friday: Broad discounts during peak shopping seasons work well. But when we analyzed the data, we found that a smaller discount (e.g., 30% off instead of 50%) combined with strategic targeting (sending it to non-paying users only) often outperformed blanket discounts.

Targeted discounts: Offering a discount at Day 30 (when churn risk is highest for users who haven't subscribed) increased revenue per user by 87%. This is a critical moment: users who haven't converted by Day 30 are at risk of churning. A strategic discount can save that customer and generate lifetime value.

At Day 90, we tested a 50% discount on annual plans for users who hadn't paid yet. This also drove strong results, capturing users who needed that extra nudge.

The key principle: discounts work best when targeted to high-churn-risk moments and specific user segments, rather than applied broadly.
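The targeting principle can be expressed as a small eligibility rule. This is a hypothetical sketch, not Mojo's system: `User`, `Offer`, and `lifecycle_offer` are invented names, the Day-30 discount size and plan are not stated in the text (so `DAY_30_DISCOUNT` is a placeholder and the plan is left unspecified), and only the Day-90 offer (50% off annual for non-payers) comes from the description above.

```python
from dataclasses import dataclass
from typing import Optional

# Placeholder: the article does not state the size of the Day-30 discount.
DAY_30_DISCOUNT = 0.30


@dataclass
class User:
    days_since_install: int
    is_paying: bool


@dataclass
class Offer:
    plan: Optional[str]  # None = plan not specified
    discount: float      # fraction off the list price


def lifecycle_offer(user: User) -> Optional[Offer]:
    """Return a discount offer for high-churn-risk moments, or None."""
    if user.is_paying:
        return None  # target non-paying users only
    if user.days_since_install == 30:
        return Offer(plan=None, discount=DAY_30_DISCOUNT)
    if user.days_since_install == 90:
        return Offer(plan="yearly", discount=0.50)
    return None


print(lifecycle_offer(User(days_since_install=90, is_paying=False)))
```

Matching on an exact day keeps the sketch short. In practice you would likely trigger on the first session on or after Day 30 or Day 90 and record that the offer was shown so it isn't repeated.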

Conclusion

Pricing isn't a set-it-and-forget-it lever. It's a system that requires continuous testing and iteration. Our 56% success rate on pricing experiments, far higher than the 14% we see for design, copy, and layout tests, shows just how valuable this work is.

The experiments that moved the needle for Mojo:

- Testing subscription duration and visual prominence

- Systematically testing price points based on competitor and user research

- Running geographic price tests to expand addressable markets

- Using retention metrics to balance revenue and churn

- Leveraging psychological anchoring and framing

- Testing trial length and targeted discounts at high-churn moments

Start with one area, perhaps subscription duration or price points. Run tests, measure the impact on 13-month revenue, and iterate. Over time, these incremental improvements compound: for Mojo, they added up to a 60% year-over-year increase in ARPU.